NVIDIA Quantum MQM8790-HS2R 200G InfiniBand Switch, Unmanaged, 40 Ports, 16 Tb/s, C2P Airflow, UFM Ready

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MQM8790-HS2R (920-9B110-00RH-0D0) |
| Document: | MQM8700 series.pdf |

Payment & shipping terms:

| Min. order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on stock |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Model no.: | MQM8790-HS2R | Transmission speed: | 200 Gb/s per port |
|---|---|---|---|
| Ports: | 40 | Technology: | InfiniBand |
| Transport package: | Packaging | Trademark: | Mellanox |
| Highlights: | NVIDIA Quantum InfiniBand switch 200G, 40-port unmanaged InfiniBand switch, 16 Tb/s Mellanox network switch | | |

Product description

High-performance, fixed-configuration unmanaged switch with 40 ports of 200 Gb/s (or 80 ports of 100 Gb/s), 16 Tb/s of non-blocking throughput, in-network computing acceleration, and ultra-low latency, purpose-built for HPC, AI clusters, and hyperscale data centers. Designed for external management through the NVIDIA UFM™ platform, with a C2P airflow configuration.

The NVIDIA Quantum MQM8790-HS2R is an unmanaged 200G InfiniBand smart switch designed for large-scale data center deployments where centralized fabric management is preferred. As part of the NVIDIA Quantum QM8700 series, it provides forty 200 Gb/s ports in a compact 1U form factor, with 16 Tb/s of aggregate non-blocking throughput and a cut-through latency below 130 ns. Unlike its managed counterparts, the MQM8790-HS2R is optimized for external management through NVIDIA Unified Fabric Manager (UFM™), which lets data center operators provision, monitor, troubleshoot, and maintain modern data center fabrics efficiently at scale.

Each 200 Gb/s QSFP56 port can be split into two independent 100 Gb/s ports, providing up to 80 ports of 100 Gb/s connectivity, which suits double-density top-of-rack deployments. The MQM8790-HS2R features C2P (connector-to-power) airflow, dual redundant power supplies, and full support for NVIDIA SHARP™ in-network computing acceleration.
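The port and throughput figures above can be checked with simple arithmetic. The sketch below assumes that the 16 Tb/s figure counts both directions of every port (40 × 200 Gb/s × 2); that reading is an assumption that happens to match the stated numbers, and the function names are illustrative only.

```python
# Figures taken from this page.
PORTS = 40
PORT_SPEED_GBPS = 200
SPLIT_FACTOR = 2  # each QSFP56 port can be split into two 100 Gb/s ports


def aggregate_throughput_tbps(ports: int, speed_gbps: int, bidirectional: bool = True) -> float:
    """Return aggregate switch throughput in Tb/s.

    Assumption: the headline figure counts traffic in both directions.
    """
    directions = 2 if bidirectional else 1
    return ports * speed_gbps * directions / 1000


def split_ports(ports: int, speed_gbps: int, factor: int = SPLIT_FACTOR) -> tuple:
    """Return (port_count, per_port_speed_gbps) after splitting every port."""
    return ports * factor, speed_gbps // factor


if __name__ == "__main__":
    print(aggregate_throughput_tbps(PORTS, PORT_SPEED_GBPS))
    print(split_ports(PORTS, PORT_SPEED_GBPS))
```

Splitting changes the port count and per-port speed but not the aggregate: 80 ports at 100 Gb/s carry the same total as 40 ports at 200 Gb/s.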

- 200 Gb/s InfiniBand per Port: forty QSFP56 ports supporting 200G or 100G split modes, with a non-blocking architecture.

- Unmanaged SKU for External Control: no on-board Subnet Manager; designed for centralized management through the NVIDIA UFM™ platform.

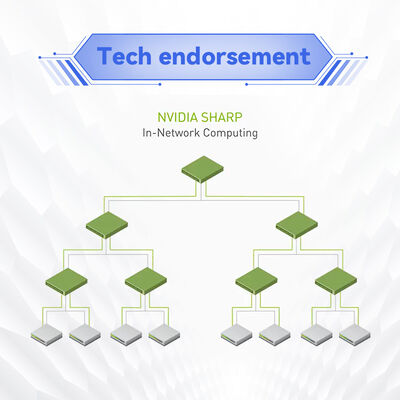

- In-Network Computing Acceleration: NVIDIA SHARP™ technology performs data aggregation inside the switch, reducing communication time for MPI, NCCL, and SHMEM collectives by up to an order of magnitude.

- High Radix & Split Capability: convert 40x 200G ports into 80x 100G ports for double-density topologies without additional switches.

- Advanced Congestion Management: adaptive routing, static routing, and Quality of Service (QoS) to relieve bottlenecks and maximize effective fabric bandwidth.

- Redundant & Hot-Swappable PSUs: 1+1 redundant power, 80 Plus Gold certified, ENERGY STAR compliant, with power optimization when only part of the ports are in use.

- C2P Airflow Configuration: connector-to-power airflow, with intake on the port side and exhaust on the power-supply side, for data center cooling layouts that supply cold air to the port side of the switch.

- UFM Ready: integrates with NVIDIA Unified Fabric Manager for advanced telemetry, predictive analytics, and automated fabric orchestration.

- Backward Compatible: interoperates with earlier InfiniBand generations (EDR, FDR).

NVIDIA Quantum switches integrate Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) engines directly into the silicon. Data passing through the switch can be processed (aggregated, reduced, or broadcast) without multiple round trips to the server endpoints. This speeds up collective operations such as all-reduce, barrier, and broadcast, which are critical for deep learning frameworks (TensorFlow, PyTorch via NCCL) and MPI-based HPC simulations. The result is up to a 10x performance gain for communication-intensive workloads and lower CPU overhead, which frees compute resources for actual application processing.
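The idea of reducing data inside the network can be illustrated with a toy model. The sketch below is not the SHARP API or protocol; it only mimics the general pattern of an aggregation tree, in which each switch level sums the vectors arriving from its children and the root result is sent back to every host. The function name and the `radix` parameter are hypothetical.

```python
from typing import List


def tree_allreduce(host_vectors: List[List[float]], radix: int = 2) -> List[List[float]]:
    """Toy all-reduce (sum) over an aggregation tree.

    Each loop iteration models one switch level: groups of up to `radix`
    incoming vectors are summed element-wise and a single vector is passed
    upward. The final vector is then copied back to every host.
    """
    if not host_vectors:
        return []
    if radix < 2:
        raise ValueError("radix must be at least 2")

    level = [list(v) for v in host_vectors]
    while len(level) > 1:
        next_level = []
        for i in range(0, len(level), radix):
            group = level[i:i + radix]
            # Element-wise sum performed "in the switch" rather than on a host.
            next_level.append([sum(values) for values in zip(*group)])
        level = next_level

    result = level[0]
    # Broadcast phase: every host receives the same reduced vector.
    return [list(result) for _ in host_vectors]


if __name__ == "__main__":
    print(tree_allreduce([[1, 2], [3, 4], [5, 6]], radix=2))
```

In this model each host sends its vector once and receives the result once, and the intermediate sums never return to a host, which is the property the paragraph above describes.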

The MQM8790-HS2R also supports adaptive routing and congestion control algorithms that automatically balance traffic across multiple paths, delivering near line-rate throughput even under heavy load.

- Large-Scale AI & ML Clusters: GPU-based systems that need centralized fabric management and telemetry across hundreds or thousands of nodes.

- High-Performance Computing (HPC): research labs, national laboratories, and universities where external fabric managers provide improved monitoring and automation.

- Hyperscale Data Centers: Fat Tree, DragonFly+, and multi-dimensional torus topologies managed through UFM.

- Enterprise & Cloud Service Providers: environments that need a unified control layer across multiple switch fabrics.

- Top-of-Rack (ToR) with Centralized Management: double-density 100 Gb/s connectivity per server with fabric-wide visibility, using C2P airflow for cooling designs that supply cold air to the port side.
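For the fat-tree deployments mentioned above, a rough sizing estimate can be derived from the switch radix. The sketch below assumes a two-tier, non-blocking fat tree built from identical switches, with half of each leaf's ports facing hosts and half facing spines, and with ports left unsplit at 200 Gb/s. The function name is hypothetical, and real designs may differ (for example with oversubscription or split ports).

```python
def two_tier_fat_tree(radix: int) -> dict:
    """Size a two-tier non-blocking fat tree built from `radix`-port switches.

    Each leaf uses radix/2 ports for hosts and radix/2 uplinks, one to each
    spine. Each spine uses all of its ports, one per leaf.
    """
    if radix < 2 or radix % 2 != 0:
        raise ValueError("radix must be a positive even number")

    hosts_per_leaf = radix // 2
    spine_switches = radix // 2   # one uplink from every leaf to every spine
    leaf_switches = radix         # every spine port connects to a distinct leaf

    return {
        "leaf_switches": leaf_switches,
        "spine_switches": spine_switches,
        "total_switches": leaf_switches + spine_switches,
        "hosts": leaf_switches * hosts_per_leaf,
    }


if __name__ == "__main__":
    print(two_tier_fat_tree(40))
```

Under these assumptions, a 40-port switch yields 40 leaves, 20 spines, and 800 host ports at 200 Gb/s.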

The MQM8790-HS2R interoperates with NVIDIA ConnectX-6, ConnectX-7, and BlueField DPU adapters, and supports both InfiniBand and mixed fabrics. It is backward compatible with earlier InfiniBand speeds (EDR 100 Gb/s, FDR 56 Gb/s) and fully interoperable with existing NVIDIA Quantum fabric switches. The switch is designed to be managed through the NVIDIA Unified Fabric Manager (UFM) platform, which provides comprehensive fabric provisioning, monitoring, and predictive troubleshooting. Supported host environments include major Linux distributions (RHEL, Ubuntu, Rocky Linux) and NVIDIA-certified GPU servers.

| Parameter | Detail |
|---|---|
| Model number | MQM8790-HS2R |
| Ports & speed | 40 QSFP56 ports; up to 200 Gb/s per port; can be split into 80 ports of 100 Gb/s |
| Total throughput | 16 Tb/s non-blocking |
| Switching latency | < 130 ns (cut-through) |
| Management | Unmanaged; external management through NVIDIA UFM™; on-board x86 dual-core CPU (Broadwell ComEx D-1508 2.2 GHz), 8 GB system memory |
| Power | 1+1 redundant, hot-swappable, 100-127 VAC / 200-240 VAC, 80 Plus Gold, ENERGY STAR |
| Airflow | C2P (connector-to-power); MQM8790-HS2R, standard depth |
| Dimensions (H x W x D) | 1.7 x 17 x 23.2 in (43.6 x 433.2 x 590.6 mm), 1U |
| Weight | With 2 PSUs: 12.48 kg / 27.5 lb |
| Operating temperature | 0°C to 40°C |
| Certifications | CE, FCC, VCCI, ICES, RCM, RoHS compliant |
| Warranty | 1-year limited hardware warranty (extension options available) |

| Orderable Part Number (OPN) | Description | Airflow | Management |
|---|---|---|---|
| MQM8790-HS2R | NVIDIA Quantum 200 Gb/s InfiniBand switch, 40 QSFP56, dual AC PSU, x86 dual-core, standard depth, C2P airflow, rail kit | C2P (connector-to-power) | Unmanaged (UFM ready) |
| MQM8790-HS2F | Same as above, but with P2C (power-to-connector) airflow | P2C | Unmanaged (UFM ready) |
| MQM8700-HS2F | Managed variant, P2C airflow, on-board Subnet Manager, MLNX-OS | P2C | Managed (MLNX-OS) |
| MQM8700-HS2R | Managed variant, C2P airflow, on-board Subnet Manager, MLNX-OS | C2P | Managed (MLNX-OS) |

For environments that need centralized fabric management across hundreds or thousands of switches, the MQM8790-HS2R is a cost-effective, unmanaged building block optimized for NVIDIA UFM orchestration, with C2P airflow suited to connector-to-power cooling configurations.

- Centralized Fabric Management: use NVIDIA UFM for unified visibility, automation, and predictive analytics across the entire fabric.

- Strong ROI: reduce capital expenditure with double-density 100G port capacity and a lower switch count for large fabrics.

- Energy Efficient: dynamic power scaling based on port usage, which lowers operating costs.

- SHARP™ Acceleration: up to 10x faster collective communication without consuming host CPU cycles.

- Scalable Topologies: native support for Fat Tree, DragonFly+, and Torus to accommodate data center growth.

- Flexible Airflow Options: the C2P configuration suits cooling architectures in which cold air is supplied at the port side of the switch.

- Proven Ecosystem: backed by NVIDIA's comprehensive software stack and 24/7 partner support.

Starsurge Group provides end-to-end lifecycle services for NVIDIA Quantum switches, including pre-sales architecture consulting, proof-of-concept testing, and worldwide logistics. Our experienced technical team offers remote troubleshooting, firmware upgrades, and RMA coordination. For UFM deployments, we offer professional services for platform configuration and integration. Warranty extension options and 24x7 priority support are available on request. Multilingual support for the EMEA, Americas, and APAC regions ensures a fast response for business-critical deployments.

- Keep the ambient temperature between 0°C and 40°C and provide adequate rack ventilation.

- Use only qualified QSFP56 optics or DAC cables listed in the NVIDIA compatibility guide.

- Airflow direction: the MQM8790-HS2R uses C2P (connector-to-power) airflow. Confirm that your rack cooling scheme matches it, with cold air supplied at the connector side.

- Connect the power supplies to the correct AC voltage (100-127 VAC or 200-240 VAC) with grounding.

- Deploy an external Subnet Manager (UFM or another) for fabric initialization and management.

- The unit weighs about 12.5 kg with two PSUs; use a suitable mechanical lift for rack mounting.

Hong Kong Starsurge Group Co., Limited is a technology-driven supplier of network hardware, IT services, and system integration solutions. Founded in 2008, the company serves customers worldwide with products such as network switches, NICs, wireless access points, controllers, cabling, and infrastructure equipment. Backed by an experienced sales and technical team, Starsurge serves sectors including government, healthcare, manufacturing, education, finance, and enterprise.

With a customer-focused approach, Starsurge concentrates on reliable quality, responsive service, and tailored solutions. As an authorized partner for leading network brands, we provide worldwide logistics, custom software development, and multilingual support, helping customers build efficient, scalable, and reliable network infrastructure.

| Component | Supported Models / Types |
|---|---|
| Adapters | NVIDIA ConnectX-6, ConnectX-7, BlueField-2 / BlueField-3 InfiniBand |
| Cables & optics | QSFP56 DAC (passive up to 3 m, active up to 5 m), AOC, optical transceivers (SR4, LR4) |
| Operating systems | Linux (RHEL 8/9, Ubuntu 20.04/22.04, Rocky Linux), Windows Server with InfiniBand stack |
| Management platforms | NVIDIA UFM, OpenSM, other standards-compliant Subnet Managers |
| Topology support | Fat Tree, DragonFly+, 2D/3D Torus, SlimFly |

- Confirm the airflow direction: the MQM8790-HS2R uses C2P (connector-to-power) airflow. Check that the rack cooling is compatible, with cold air supplied at the connector side.

- Verify the required port speed (native 200G or 100G breakout) and the cable assembly type.

- Check the power input: dual redundant AC with C13/C14 connectors.

- Make sure the rack depth accommodates 23.2 inches (standard depth).

- Plan for an external Subnet Manager: a UFM license or an OpenSM deployment is required to operate the fabric.

- Validate the UFM software licensing requirements if advanced telemetry features are needed.
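The checklist above can be captured as a small validation routine. The following is a minimal sketch: the `SitePlan` fields and the `preorder_issues` function are hypothetical names, and the thresholds (C2P airflow, 23.2 in depth, 0°C to 40°C) are taken from the figures on this page. It covers only the items that reduce to simple comparisons.

```python
from dataclasses import dataclass
from typing import List

# Values taken from this page for the MQM8790-HS2R.
SWITCH_AIRFLOW = "C2P"
SWITCH_DEPTH_IN = 23.2
OPERATING_TEMP_C = (0.0, 40.0)


@dataclass
class SitePlan:
    """Hypothetical description of the target rack and fabric plan."""
    rack_airflow: str                  # "C2P" or "P2C"
    usable_rack_depth_in: float
    min_ambient_c: float
    max_ambient_c: float
    has_external_subnet_manager: bool  # UFM, OpenSM, or another SM


def preorder_issues(plan: SitePlan) -> List[str]:
    """Return a list of human-readable problems; an empty list means none found."""
    issues = []
    if plan.rack_airflow != SWITCH_AIRFLOW:
        issues.append(f"Rack airflow is {plan.rack_airflow}, switch is {SWITCH_AIRFLOW}.")
    if plan.usable_rack_depth_in < SWITCH_DEPTH_IN:
        issues.append(f"Rack depth below {SWITCH_DEPTH_IN} in.")
    low, high = OPERATING_TEMP_C
    if plan.min_ambient_c < low or plan.max_ambient_c > high:
        issues.append(f"Ambient temperature outside {low} to {high} C.")
    if not plan.has_external_subnet_manager:
        issues.append("No external Subnet Manager planned (unmanaged switch).")
    return issues


if __name__ == "__main__":
    plan = SitePlan("P2C", 30.0, 18.0, 27.0, True)
    for issue in preorder_issues(plan):
        print(issue)
```

Items such as cable type, power connectors, and UFM licensing still need a manual review against the vendor documentation.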

- NVIDIA Unified Fabric Manager (UFM) Platform Licenses

- NVIDIA Quantum QM9700 Series (NDR 400G InfiniBand)

- NVIDIA ConnectX-6 VPI Adapter Cards (100 Gb/s Dual-Port)

- NVIDIA BlueField-3 DPU for infrastructure acceleration

- Starsurge Custom Rack Integration Kits & QSFP56 Cables (passive/active)